Winutils binaries are different for each Hadoop version, so download the one that matches your Hadoop version.

Now open the command prompt and type the pyspark command to run the PySpark shell. You should see something like the screenshot below.

Apache Spark provides a suite of Web UIs (Jobs, Stages, Tasks, Storage, Environment, Executors, and SQL) to monitor the status of your Spark application, the resource consumption of the Spark cluster, and Spark configurations. Spark-shell also creates a Spark context Web UI, which by default can be accessed from your browser. On the Spark Web UI, you can see how the operations are executed.

The Spark History server keeps a log of all Spark applications you submit through spark-submit and spark-shell. Before you start it, you first need to set the history server configuration in the Spark configuration file. Then start the Spark history server on Linux or Mac by running the history server start script. If you are running Spark on Windows, start the history server with the equivalent Windows command.
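The history server setup described in this section can be sketched in Python as follows. This is a minimal sketch, not the original tutorial's commands: the property names (`spark.eventLog.enabled`, `spark.eventLog.dir`, `spark.history.fs.logDirectory`), the `sbin/start-history-server.sh` script, and the `org.apache.spark.deploy.history.HistoryServer` class come from the standard Spark distribution, while the helper names, the `/opt/spark` path, and the log directory are assumptions made for illustration.

```python
from pathlib import PurePosixPath, PureWindowsPath


def history_server_conf(log_dir):
    """Build the lines to add to conf/spark-defaults.conf.

    Applications write their event logs to log_dir and the history
    server reads them back from the same location.
    """
    return [
        "spark.eventLog.enabled true",
        f"spark.eventLog.dir {log_dir}",
        f"spark.history.fs.logDirectory {log_dir}",
    ]


def history_server_command(spark_home, windows):
    """Return the command (as an argument list) that starts the history server."""
    if windows:
        # Windows has no start script, so the server class is launched directly.
        launcher = PureWindowsPath(spark_home) / "bin" / "spark-class.cmd"
        return [str(launcher), "org.apache.spark.deploy.history.HistoryServer"]
    # Linux and Mac ship a dedicated start script under sbin.
    return [str(PurePosixPath(spark_home) / "sbin" / "start-history-server.sh")]


if __name__ == "__main__":
    # Hypothetical log directory and install locations, for illustration only.
    print("\n".join(history_server_conf("file:///c:/logs/spark-events")))
    print(history_server_command("/opt/spark", windows=False))
    print(history_server_command(r"C:\apps\spark-3.0.0-bin-hadoop2.7", windows=True))
```

The helpers only build strings, so nothing is started or written; you would paste the configuration lines into the Spark configuration file and run the printed command yourself. The log directory has to exist before the history server starts.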

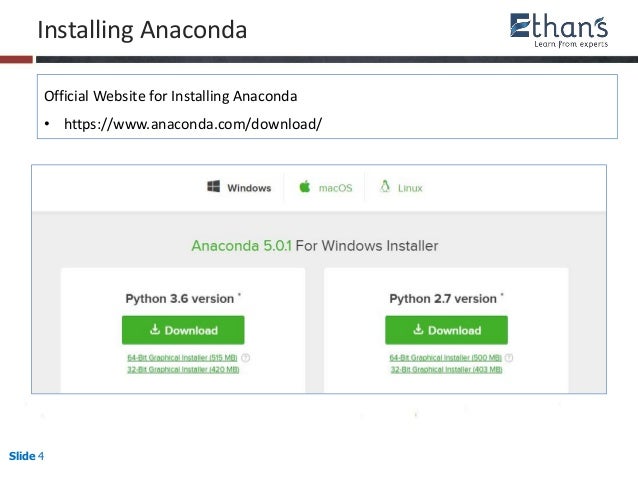

This page is kind of a repository of all Spark third-party libraries.

In order to run the PySpark examples mentioned in this tutorial, you need to have Python, Spark, and its required tools installed on your computer. Since most developers use Windows for development, I will explain how to install PySpark on Windows.

Download and install either Python or the Anaconda distribution, which includes Python, Spyder IDE, and Jupyter notebook. I would recommend using Anaconda, as it is popular and used by the machine learning and data science community. Follow the instructions to install the Anaconda Distribution and Jupyter Notebook.

To run a PySpark application, you need Java 8 or a later version, so download Java from Oracle and install it on your system. After installation, set the JAVA_HOME and PATH variables.

JAVA_HOME = C:\Program Files\Java\jdk1.8.0_201

PATH = %PATH%;C:\Program Files\Java\jdk1.8.0_201\bin

Download Apache Spark by accessing the Spark Download page and selecting the link from “Download Spark (point 3)”. If you want to use a different version of Spark and Hadoop, select the one you want from the drop-downs; the link at point 3 changes to the selected version and gives you an updated download link.

After the download, untar the binary using 7zip and copy the underlying folder spark-3.0.0-bin-hadoop2.7 to c:\apps. Now set the following environment variables.

SPARK_HOME = C:\apps\spark-3.0.0-bin-hadoop2.7

PATH = %PATH%;C:\apps\spark-3.0.0-bin-hadoop2.7\bin

Download the winutils.exe file from winutils, and copy it to the %SPARK_HOME%\bin folder.
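Before launching the shell, it can help to confirm that the variables from the steps above are actually visible to Python. The following is a minimal sketch under stated assumptions: the function name `check_pyspark_env` and its `require_winutils` parameter are hypothetical helpers, not part of PySpark, and the script only checks that the folders and the winutils.exe file exist, not that their versions match.

```python
import os
from pathlib import Path


def check_pyspark_env(env=None, require_winutils=None):
    """Return a list of problems found in the Spark-related environment.

    env defaults to the real process environment; require_winutils
    defaults to True only when running on Windows.
    """
    env = os.environ if env is None else env
    if require_winutils is None:
        require_winutils = os.name == "nt"

    problems = []
    for var in ("JAVA_HOME", "SPARK_HOME"):
        value = env.get(var)
        if not value:
            problems.append(f"{var} is not set")
        elif not Path(value).is_dir():
            problems.append(f"{var} points to a missing folder: {value}")

    # winutils.exe is expected inside %SPARK_HOME%\bin, as described above.
    spark_home = env.get("SPARK_HOME")
    if require_winutils and spark_home and Path(spark_home).is_dir():
        winutils = Path(spark_home) / "bin" / "winutils.exe"
        if not winutils.is_file():
            problems.append(f"winutils.exe not found at {winutils}")

    return problems


if __name__ == "__main__":
    found = check_pyspark_env()
    print("Environment looks OK" if not found else "\n".join(found))
```

An empty list means both variables point to existing folders and, on Windows, winutils.exe is in place. Run it from a new command prompt, since changes to environment variables are not picked up by prompts that were already open.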
